Oleg Braginsky, founder of the School of Troubleshooters, and student Konstantin Alekseev worked out what the creative department's processes should look like by improving its efficiency and mapping the connections and interactions between them. The resulting picture led to a structured, staged plan listing the upcoming changes.

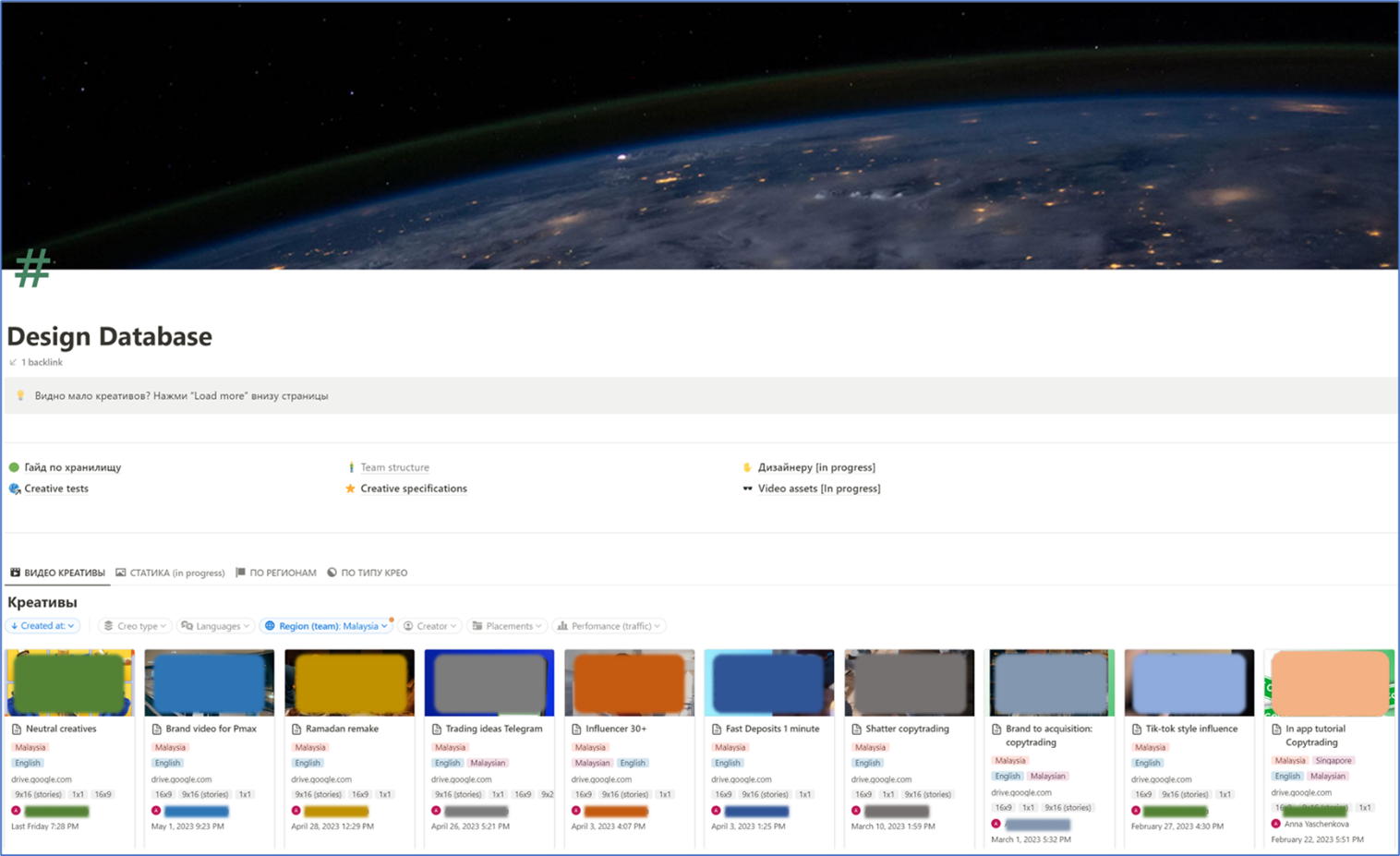

Developing a creative database

The marketing department consisted of regional teams responsible for user acquisition. Ideas were produced and stored in a way that was familiar to the participants but far from optimal. This historically established approach led to misunderstanding and confusion:

1. How to understand which creative is the most successful in terms of attracting users?

2. How to form new hypotheses for the content without using the same ones twice?

3. How to define the correct file sizes and source materials?

4. How to view the total amount of used materials in each region of presence?

The answers to these questions were varied and contradictory. The solution was to consolidate all of the creatives into a single database. After assessing the risks and evaluating the implementation costs, we chose Notion, described the process, and confirmed it with the key stakeholders, the designers and marketers:

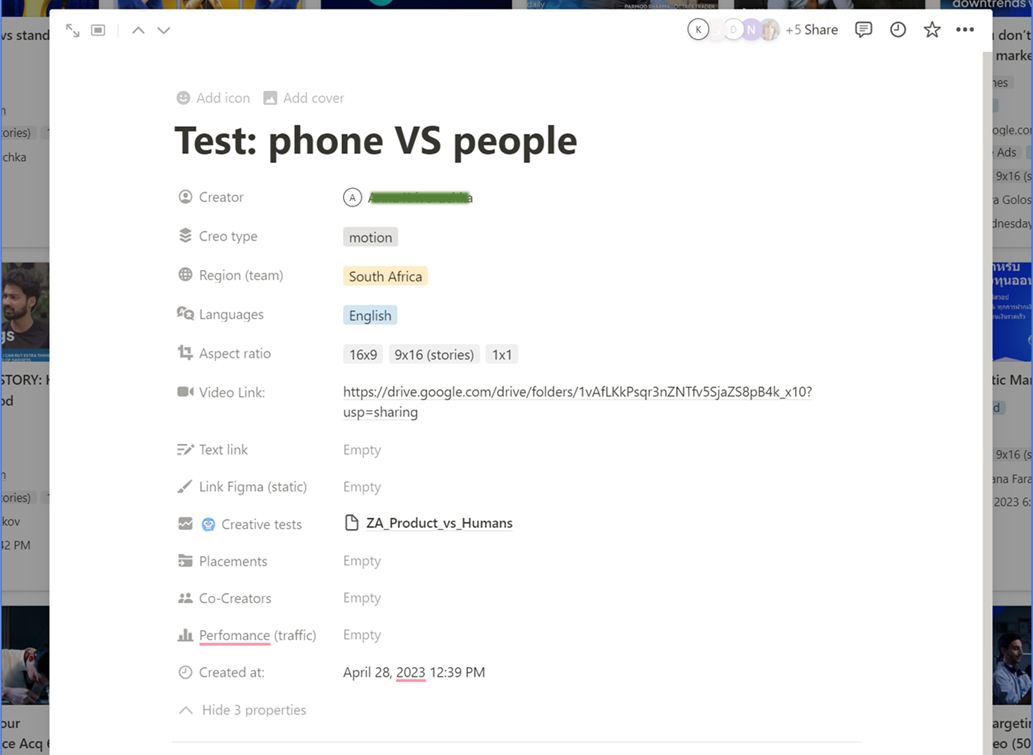

Each creative's card was structured as follows:

Creative’s name format

We introduced a numbering system for naming each unit, using a numeric code starting with "001". For a new version, the value became "001-01". The name also indicated the language, format, duration, and resolution, for example EN_video_30sec_ID001-01_16x9. We did the same with static banners.
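A naming convention like this is easiest to keep consistent when names are generated and checked by a small helper instead of typed by hand. The sketch below is only an illustration built from the single example above: the field order, the `ID` prefix, and the zero padding are read off that example, while the function names, the `CreativeName` type, and the exact pattern are assumptions. It covers the video form only, since the banner variant is not spelled out.

```python
import re
from typing import NamedTuple, Optional


class CreativeName(NamedTuple):
    """Fields encoded in a creative's name (hypothetical structure)."""
    language: str
    media: str
    duration_sec: int
    creative_id: int
    version: Optional[int]
    aspect: str


# Pattern inferred from the example EN_video_30sec_ID001-01_16x9 (assumption).
NAME_RE = re.compile(
    r"^(?P<language>[A-Z]{2})_(?P<media>[a-z]+)_(?P<duration>\d+)sec_"
    r"ID(?P<id>\d{3})(?:-(?P<version>\d{2}))?_(?P<aspect>\d+x\d+)$"
)


def build_name(language, media, duration_sec, creative_id, aspect, version=None):
    """Compose a name such as EN_video_30sec_ID001-01_16x9."""
    unit = f"ID{creative_id:03d}"
    if version is not None:
        unit += f"-{version:02d}"  # versions are appended as "-01", "-02", ...
    return f"{language.upper()}_{media.lower()}_{duration_sec}sec_{unit}_{aspect}"


def parse_name(name):
    """Split a name back into its fields, rejecting anything off-format."""
    match = NAME_RE.match(name)
    if match is None:
        raise ValueError(f"name does not follow the convention: {name!r}")
    version = match.group("version")
    return CreativeName(
        language=match.group("language"),
        media=match.group("media"),
        duration_sec=int(match.group("duration")),
        creative_id=int(match.group("id")),
        version=int(version) if version is not None else None,
        aspect=match.group("aspect"),
    )
```

Calling `build_name("en", "video", 30, 1, "16x9", version=1)` reproduces the example name, and `parse_name` can be run over a folder listing to flag files that drifted from the convention.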

Mapping hypotheses

Production felt like working amid chaotically generated assumptions. We removed the need to keep asking “Have we already tried this?” by creating a map of validated hypotheses based on product research and interviews with existing and potential customers.

It was quite an exercise to collect, clean, group, and organize all of the necessary data points on a Miro board. As a result, the ideation team working with the bank of ideas could quickly understand and evaluate what had already been tested, without repeating previous work.

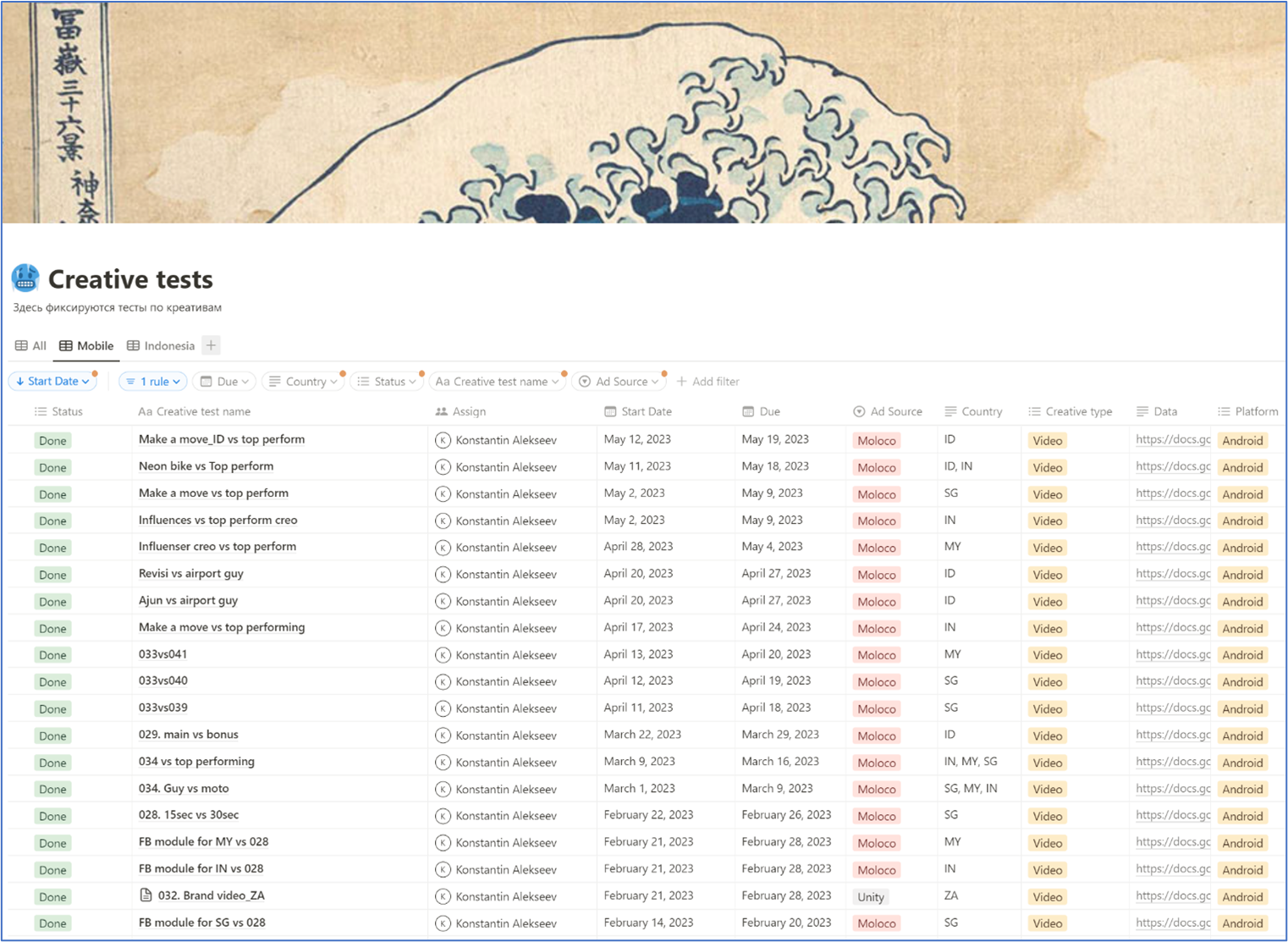

Test database design

A/B experiments were stored in Notion as pages linked to the creative database. A link to the tested insight was added to each card. We agreed on the process with the traffic managers, fine-tuned the connection, and began to watch the information come in.

As a result, a marketing manager could review the hypotheses being tested by looking at the output:

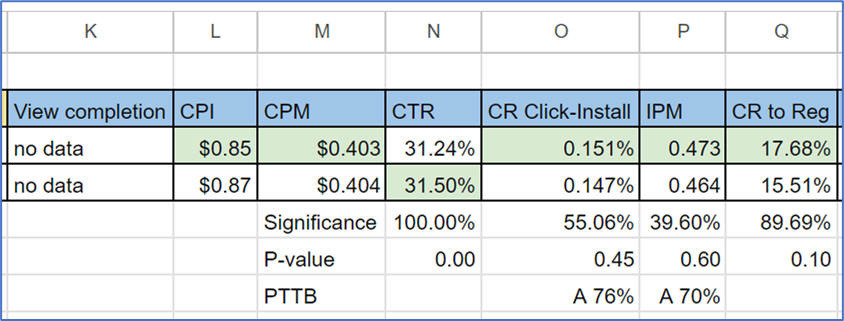

Checking the output

Collecting test scores alone was not enough. We also had to assess usefulness, that is, whether the team could draw a valuable insight from the experiment. The evaluation relied on a statistical significance calculator and a p-value test, run with the Stats Machina extension.
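To make the idea of a p-value test on two creatives concrete, here is a minimal sketch. It does not reproduce the extension's internals, which are not described here. It assumes a two-sided two-proportion z-test on conversion counts, and the function name and inputs are hypothetical.

```python
import math


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates.

    conv_* are conversion counts, n_* are impressions or users per variant.
    Assumes a pooled two-proportion z-test (an assumption of this sketch).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # For a standard normal, 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))
```

A result below the chosen threshold (0.05 is a common convention) suggests the difference between the two creatives is unlikely to be noise. A result above it means the experiment has not yet produced a usable insight.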

Testing for statistical significance usually requires a large amount of initial data. We adopted the Bayesian method and used it while taking the duration of the calculations into account. We integrated the calculator APIs with Google Spreadsheets and got the same result as with the Chrome extension.
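The Bayesian approach can be illustrated in a few lines. The sketch below is not the calculator API mentioned above. It assumes a uniform Beta(1, 1) prior on each conversion rate and estimates the probability that variant B beats variant A by sampling from the two posteriors. The function name, the prior, and the number of draws are all assumptions.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta-Binomial posteriors.

    With a Beta(1, 1) prior, the posterior for a variant with `conv`
    conversions out of `n` trials is Beta(1 + conv, 1 + n - conv).
    """
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws
```

Unlike a p-value, the output reads directly as "the chance that B is better than A", which stays interpretable on small samples and is easy to place in a spreadsheet column next to the raw metrics.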

Test conclusions

Even though the content of the data table had become much clearer to marketers, we asked ourselves: what about people who are unfamiliar with the metrics? We added two more fields, “conclusion” and “meaning”, for extra clarity. This saved a significant amount of time when explaining an experiment to our peers.

Brand code

While generating creative ideas, we found that the company had its own brand code and rules on prohibited advertising messages. We gathered the documentation, shared a link with everyone, and formed a team of reviewers who could evaluate an upcoming creative at any time.

Timely filling of the creative database

Despite the agreed process, some of the materials did not make it into the database. Versions of creatives and short influencer cuts were scattered across team members' folders. We explained the importance of a consistent database to the rest of the company, gently dismantling ivory towers.

An additional meeting with the designers helped resolve the issues and smooth over differences.

Minor and major hypotheses

The teams began to generate hypotheses for testing, and the question of how to evaluate them came up. We proposed adopting RICE, a scoring methodology introduced by Intercom's product team, which consists of four components: Reach (audience), Impact (on the business), Confidence, and estimated Effort.

We introduced a scoring system from 0 to 3 for each component, which turns the combined indicator into a rating and the rating into a priority. Discussions with the traffic managers helped us align, review candidate hypotheses for initial training, and develop a work plan for the coming quarters.
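The way the four components combine is not spelled out above, so the sketch below assumes the standard RICE formula, Reach × Impact × Confidence divided by Effort, applied to the 0 to 3 scale. Because an effort of 0 would make the division undefined, effort is floored at 1, which is an assumption of this sketch. The class name, function names, and sample hypotheses are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A creative hypothesis scored 0..3 on each RICE component."""
    name: str
    reach: int
    impact: int
    confidence: int
    effort: int

    def __post_init__(self):
        for field_name in ("reach", "impact", "confidence", "effort"):
            value = getattr(self, field_name)
            if not 0 <= value <= 3:
                raise ValueError(f"{field_name} must be between 0 and 3, got {value}")


def rice_score(hypothesis):
    """Standard RICE: (reach * impact * confidence) / effort.

    Effort is floored at 1 to avoid division by zero (assumption).
    """
    numerator = hypothesis.reach * hypothesis.impact * hypothesis.confidence
    return numerator / max(hypothesis.effort, 1)


def prioritize(hypotheses):
    """Return hypotheses ordered from highest to lowest RICE score."""
    return sorted(hypotheses, key=rice_score, reverse=True)


# Hypothetical backlog used only to show the ordering.
backlog = [
    Hypothesis("UGC-style video", reach=3, impact=2, confidence=2, effort=1),
    Hypothesis("Localized banner set", reach=3, impact=3, confidence=3, effort=3),
    Hypothesis("Influencer short cut", reach=2, impact=1, confidence=1, effort=2),
]
ranked = prioritize(backlog)
```

Here the cheap UGC-style video ranks above the high-impact but expensive banner set, which is the kind of trade-off the rating is meant to bring out in planning discussions.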

Creativity also requires discipline! Let us know what your thoughts are.